News & Talks

IX Lab YouTube channel: https://www.youtube.com/@UTAustinIXLab

Can eye-tracking reveal whether Interactive AI systems are more engaging?

IX Lab CHIIR 2022 paper: “The Effects of Interactive AI Design on User Behavior: An Eye-tracking Study of Fact-checking COVID-19 Claims”

Ph.D. student Yung-Sheng Chang: Talk at ACM SIGIR CHIIR 2022: “Perceived eHealth Literacy vis-a-vis Information Search Outcome: A Quasi-Experimental Study”

Health Information Seeking study: How do eye-movement patterns differ between people with high and low eHealth literacy (eHEALS)?

Can AI tell from our eyes when we read relevant information?

Ph.D. student Nilavra Bhattacharya: Talk at ACM SIGIR CHIIR 2020 (March 2020): “Relevance Prediction from Eye Movements Using Semi-interpretable Convolutional Neural Networks”

YASBIL – Yet Another Search Behaviour and Interaction Logger – SIGIR 2021

Ph.D. student Nilavra Bhattacharya: Demo talk at ACM SIGIR 2021: “YASBIL – Yet Another Search Behaviour and Interaction Logger”

YASBIL v1.0 – How to Use

Ph.D. student Nilavra Bhattacharya: Demo: “YASBIL – How to Use”

Neuro-physiological evidence as a basis for understanding human-information interaction

Dr. Jacek Gwizdka: Talk at UT Applied Research Lab (December 2019): “Neuro-physiological evidence as a basis for understanding human-information interaction”

Dr. Jacek Gwizdka – “Neuro-Physiological Evidence as a Basis for Studying Search”

Dr. Jacek Gwizdka: Talk at Rutgers University (December 2015): “Neuro-Physiological Evidence as a Basis for Studying Search”

The IX Lab podcast is available on all major services, including Amazon Music, Spotify, and Apple Podcasts.

Selected episodes are available on the IX Lab YouTube channel: https://www.youtube.com/@UTAustinIXLab

Popularizing research from the Information eXperience Lab at The University of Texas at Austin

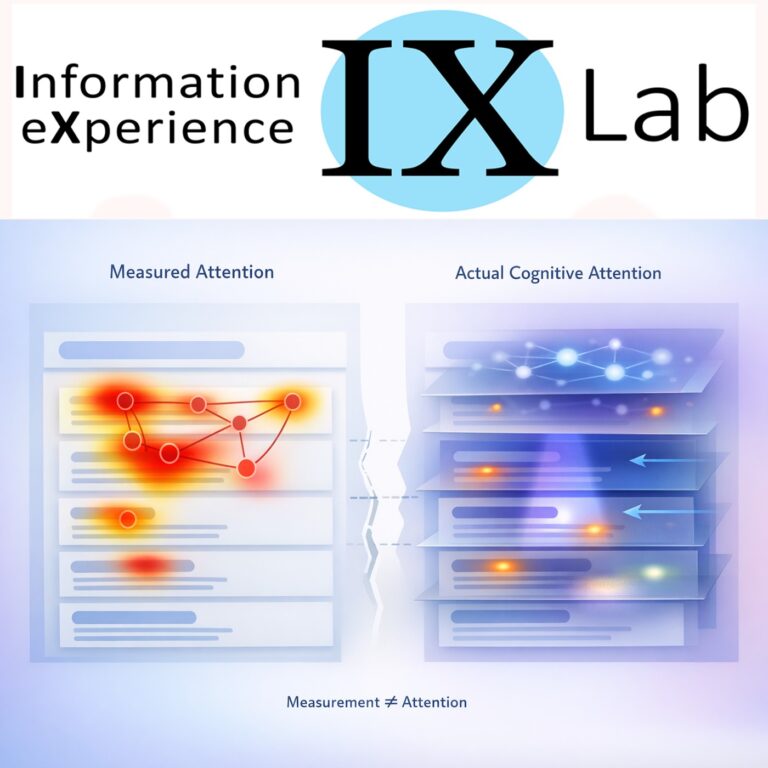

Zhang, D., Jayawardena, G., & Gwizdka, J. (2026). Attention! Rethinking What We Measure in CHIIR Studies. Proceedings of the 2026 Conference on Human Information Interaction and Retrieval, CHIIR ’26, 100–110. https://doi.org/10.1145/3786304.3787944

Copyright is held by the paper authors.

The conversation in this podcast was generated by AI (NotebookLM.google) from the full text of the paper. Illustration generated by ChatGPT. Music (C) Jacek Gwizdka.

IX Lab website: https://ixlab.us/

Dr. Jacek Gwizdka: https://jacekg.ischool.utexas.edu/